Blog

Introducing EPIC: Your AI Quality Companion

Discover EPIC by TAUS: an AI-driven tool combining Quality Estimation and Automatic Post-Editing for optimized, cost-effective, and high-quality translation workflows. Try it out today.

Is Quality Estimation the next big thing in translation? Learn how QE improves MT accuracy, streamlines workflows and saves costs for LSPs and enterprises.

Feb 13, 2025

Unlock cost-effective translation workflows using Quality Estimation and Large Language Models for faster, high-quality results, and discover how to optimize your translation process.

Sep 17, 2024

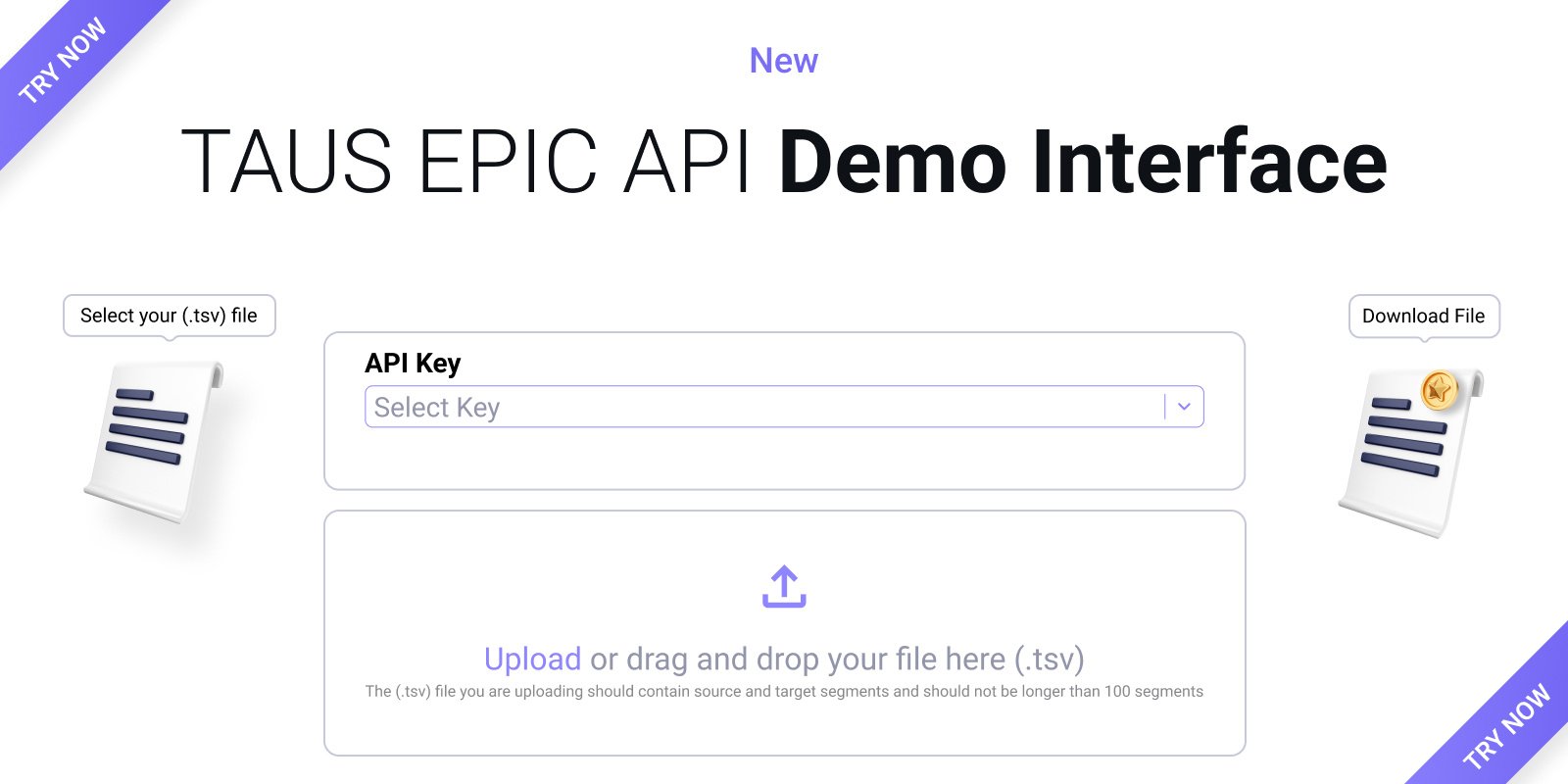

Discover the new TAUS EPIC API Demo Interface to quickly assess machine translation quality, saving time and costs while boosting productivity.

Aug 08, 2024

MORE BLOGS

Discover EPIC by TAUS: an AI-driven tool combining Quality Estimation and Automatic Post-Editing for optimized, cost-effective, and high-quality translation workflows. Try it out today.

Mar 26, 2025

The 20th anniversary of TAUS this month prompted the team to look back at its predictions and their outcomes so far. What have we achieved? What went wrong?

Nov 21, 2024

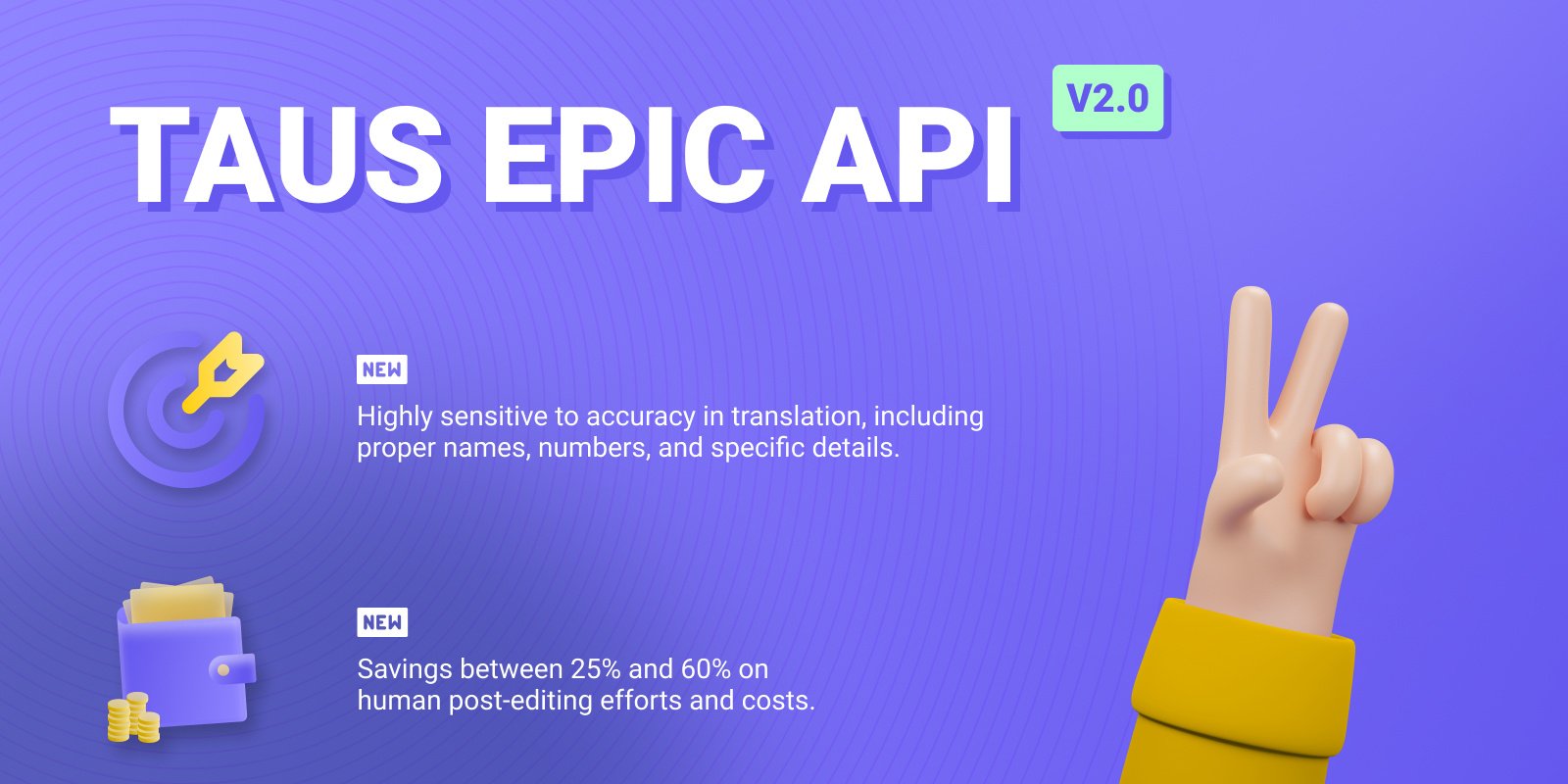

Discover the enhanced TAUS EPIC API V2 for improved translation accuracy in 5 EU languages, reducing post-editing efforts by up to 60%.

Jul 29, 2024

The evolution of the language industry over the past two decades includes a transition from rule-based Machine Translation to the integration of AI. Learn more about how two industries converge at the TAUS conferences in Rome and Albuquerque this year.

Apr 02, 2024

Purchase TAUS's exclusive data collection, featuring close to 7.4 billion words and covering 483 language pairs, now available at a discount of more than 95% off the original value.

Mar 11, 2024

Find out how companies integrate QE into their workflows and explore real-world use cases and benefits of quality estimation, from mitigating risk in global chat communication to minimizing post-editing in machine translation workflows.

Jan 31, 2024